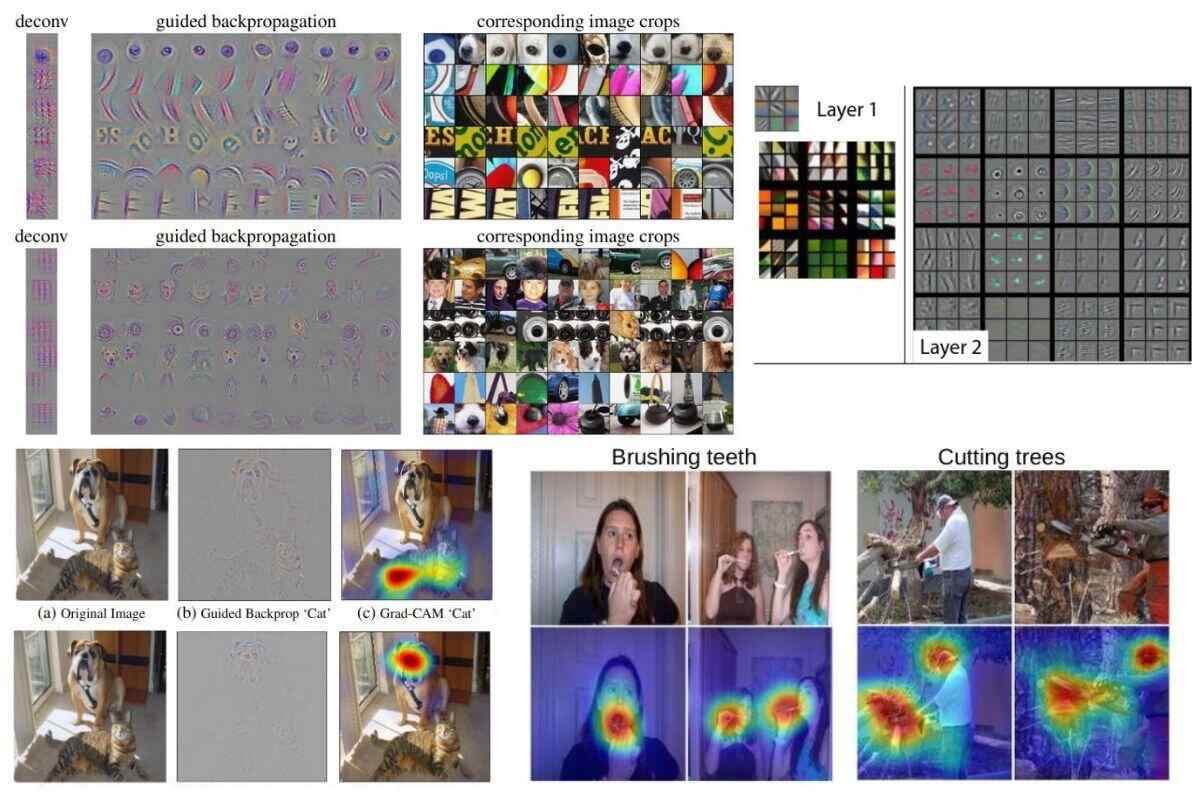

However, the focus of this post will be on saliency maps created from trained CNNs. There are traditional computer vision saliency detection algorithms (e.g. A saliency map is any visualization of an image in which the most salient/most important pixels are highlighted. Google defines “salient” as “most noticeable or important.” The concept of a “saliency map” is not limited to neural networks. You can see the code that was used to create these saliency maps at pytorch-cnn-visualizations/src/vanilla_backprop.py. “Vanilla Backpropagation Saliency” is the result of converting the “Colored Vanilla Backpropagation” image into a grayscale image. This figure is from utkuozbulak/pytorch-cnn-visualizations :Ībove, “Colored Vanilla Backpropagation” means a saliency map created with RGB color channels. Saliency maps are shown both in color and in grayscale. The figure below shows three images - a snake, a dog, and a spider - and what the saliency maps look like for each image. For a summary of why that’s useful, see this post. In this post I will describe the CNN visualization technique commonly referred to as “saliency mapping” or sometimes as “backpropagation” (not to be confused with backpropagation used for training a CNN.) Saliency maps help us understand what a CNN is looking at during classification.
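To make the idea concrete, here is a minimal PyTorch sketch of a vanilla backpropagation saliency map: the gradient of one class score with respect to the input pixels. It is not the code from pytorch-cnn-visualizations. The names `vanilla_saliency` and `toy_model` are hypothetical, the small randomly initialised network only stands in for a trained CNN, and the grayscale step (sum of absolute gradients over the color channels, rescaled to [0, 1]) is one simple choice that I am assuming for illustration.

```python
import torch
import torch.nn as nn


def vanilla_saliency(model, image, target_class):
    """Return (colored, grayscale) saliency maps for one image of shape (C, H, W).

    Hypothetical helper for illustration; not taken from any library.
    """
    model.eval()
    # Add a batch dimension and ask autograd to track gradients w.r.t. the pixels.
    x = image.detach().clone().unsqueeze(0).requires_grad_(True)

    scores = model(x)                    # shape (1, num_classes)
    model.zero_grad()
    # Backpropagate only the score of the class we want to explain.
    scores[0, target_class].backward()

    # "Colored" map: one gradient value per pixel per color channel.
    colored = x.grad[0]                  # shape (C, H, W)

    # Grayscale map (assumed conversion): sum absolute gradients over the
    # channels, then rescale to [0, 1].
    gray = colored.abs().sum(dim=0)      # shape (H, W)
    gray = (gray - gray.min()) / (gray.max() - gray.min() + 1e-12)
    return colored, gray


# Toy stand-in for a trained CNN (random weights, 10 output classes).
torch.manual_seed(0)
toy_model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(8, 10),
)

image = torch.rand(3, 32, 32)            # random "image" in place of a real photo
colored, gray = vanilla_saliency(toy_model, image, target_class=3)
print(colored.shape, gray.shape)
```

With a real trained CNN and a real photo, the bright pixels in `gray` are the ones whose small changes would most affect the chosen class score, which is what the grayscale panels in the figure visualize.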
